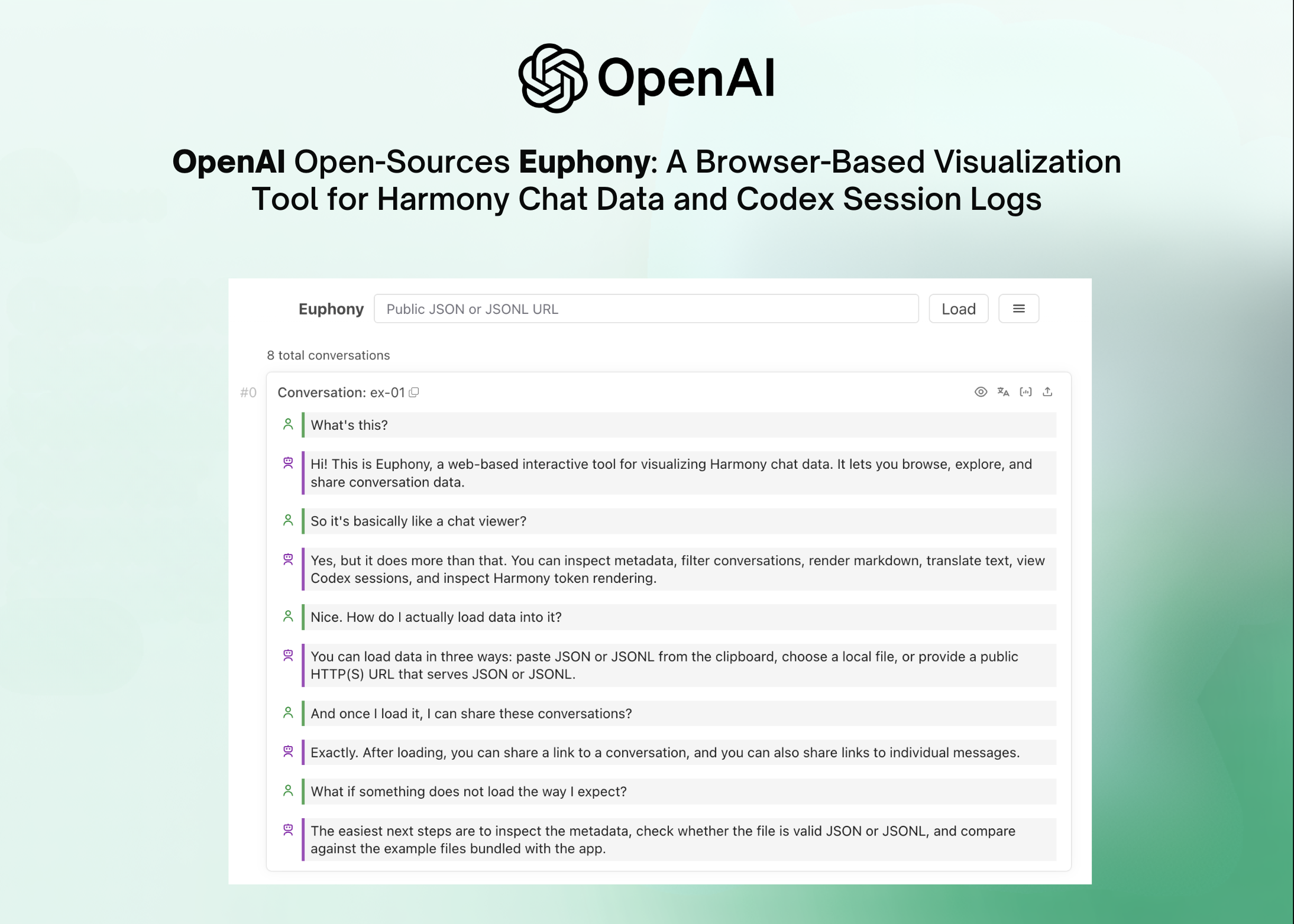

OpenAI Open-Sources Euphony: A Browser-Based Visualization Tool for Harmony Chat Data and Codex Session Logs

Debugging an AI agent that runs across multiple steps (reading files, calling APIs, writing code, and revising its output) is not like debugging an ordinary function. There is no single stack trace to read. Instead, developers are left staring at hundreds of lines of raw JSON, trying to reconstruct what the model was actually thinking and doing at each step. OpenAI is taking a direct swing at that problem with the release of Euphony, a new open-source, browser-based visualization tool designed to turn structured chat data and Codex session logs into readable, interactive conversation views.

Euphony is built specifically around two OpenAI data formats: Harmony conversations and Codex session JSONL files.

What is Harmony Format?

To understand why Euphony exists, you need a quick primer on Harmony. OpenAI's open-weight model series, gpt-oss, was trained on a specialized prompt format called the harmony response format. Unlike traditional chat formats, Harmony supports multi-channel output, meaning a model can emit reasoning output, tool-call preambles, and regular responses all within one structured conversation. It also supports a role-based instruction hierarchy (system, developer, user, assistant) and named tool namespaces.

As a result, a single Harmony conversation stored as a .json or .jsonl file can contain more structured metadata than a standard OpenAI API response. This richness helps with training, evaluation, and agent workflows, but it also makes raw inspection painful. Without tooling, you end up reading deeply nested JSON objects with token IDs, encoded tokens, and display strings all interleaved. Euphony is designed to solve this problem.
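To make the multi-channel idea concrete, here is a minimal sketch that groups the messages of one conversation by channel. The record shape (`role`, `channel`, `content` keys) is a simplified assumption for illustration and not the exact Harmony schema; `group_by_channel` is a hypothetical helper, not part of Euphony or any OpenAI library.

```python
from collections import defaultdict

# Hypothetical, simplified message records. The field names are assumptions
# made for this sketch, not the exact Harmony schema.
conversation = [
    {"role": "system", "channel": None, "content": "You are a helpful assistant."},
    {"role": "user", "channel": None, "content": "What is 2 + 2?"},
    {"role": "assistant", "channel": "analysis", "content": "Simple arithmetic."},
    {"role": "assistant", "channel": "final", "content": "4"},
]


def group_by_channel(messages):
    """Group message contents by channel, using 'none' when no channel is set."""
    grouped = defaultdict(list)
    for message in messages:
        grouped[message.get("channel") or "none"].append(message["content"])
    return dict(grouped)


channels = group_by_channel(conversation)
for name, contents in channels.items():
    print(f"{name}: {contents}")
```

Even in this toy form, reasoning and the user-facing answer sit in separate buckets, which is the separation a viewer has to surface when the same information is buried in raw JSON.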

What Euphony Actually Does

At its core, Euphony is a web component library and a standalone web application that ingests Harmony JSON/JSONL data or Codex session JSONL files and renders them as structured conversation timelines you can browse in the browser.

The tool supports three ways to load data out of the box: pasting JSON or JSONL directly from the clipboard, loading a local .json or .jsonl file from disk, or pointing to any public HTTP(S) URL that serves JSON or JSONL, including Hugging Face dataset URLs. Euphony then automatically detects the format and renders accordingly across four cases: if the JSON or JSONL is a list of conversations, it renders all of them; if it detects a Codex session file, it builds a structured Codex session timeline; if a conversation is stored under a top-level field, it renders all conversations and treats the other top-level fields as per-conversation metadata; and if none of these match, it falls back to rendering the data as raw JSON objects.
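The four-way detection described above can be sketched roughly as follows. This is not Euphony's actual logic: the article does not say how each case is recognized, so the `messages` key and the `session_event` marker below are hypothetical heuristics chosen only to make the control flow runnable.

```python
import json


def parse_json_or_jsonl(text):
    """Parse text as one JSON document, else as one JSON value per line."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return [json.loads(line) for line in text.splitlines() if line.strip()]


def looks_like_conversation(value):
    # Assumption: a conversation is an object holding a "messages" list.
    return isinstance(value, dict) and isinstance(value.get("messages"), list)


def looks_like_codex_session(records):
    # Assumption: "session_event" is a made-up marker key for this sketch.
    return all(isinstance(r, dict) and "session_event" in r for r in records)


def detect_format(data):
    """Classify parsed data into one of the four cases, in the article's order."""
    records = data if isinstance(data, list) else [data]
    if not records:
        return "raw_json"
    if all(looks_like_conversation(r) for r in records):
        return "conversation_list"
    if looks_like_codex_session(records):
        return "codex_session"
    # Conversation stored under a top-level field; other fields act as metadata.
    if all(
        isinstance(r, dict) and any(looks_like_conversation(v) for v in r.values())
        for r in records
    ):
        return "nested_conversations"
    return "raw_json"


jsonl_text = '{"messages": [{"role": "user", "content": "hi"}]}\n{"messages": []}'
print(detect_format(parse_json_or_jsonl(jsonl_text)))
```

Ordering the checks from most to least specific keeps the raw-JSON fallback as the final branch, so unrecognized input still renders as something rather than failing.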

The feature set goes well beyond basic rendering. Euphony surfaces conversation-level and message-level metadata directly in the UI through a metadata inspection panel, which is useful when inspecting annotated datasets where each conversation carries additional fields such as scores, sources, or labels. It also supports JMESPath-based filtering, which lets developers narrow large datasets by querying the JSON structure. There is a focus mode that filters visible messages by role, recipient, or content type, a grid view for skimming datasets quickly, and an editor mode for modifying JSONL content directly in the browser. Markdown rendering (including mathematical formulas) and optional HTML rendering are supported within message content.
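The idea behind focus mode, keeping only messages that match a chosen role, recipient, or content type, can be approximated in a few lines. This is a plain-Python sketch rather than Euphony's implementation; the `focus` helper and the `role`, `recipient`, and `content_type` field names are assumptions for illustration.

```python
def focus(messages, role=None, recipient=None, content_type=None):
    """Keep only messages matching every criterion that was provided."""

    def matches(message):
        return (
            (role is None or message.get("role") == role)
            and (recipient is None or message.get("recipient") == recipient)
            and (content_type is None or message.get("content_type") == content_type)
        )

    return [m for m in messages if matches(m)]


# Hypothetical messages; the field names and values are illustrative only.
messages = [
    {"role": "user", "recipient": None, "content_type": "text"},
    {"role": "assistant", "recipient": "browser.search", "content_type": "code"},
    {"role": "assistant", "recipient": None, "content_type": "text"},
    {"role": "tool", "recipient": "assistant", "content_type": "text"},
]

assistant_text = focus(messages, role="assistant", content_type="text")
tool_calls = focus(messages, recipient="browser.search")
print(len(assistant_text), len(tool_calls))
```

Treating an omitted criterion as "match anything" lets the same function cover single-field and combined filters.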

Two Modes of Operation: Frontend-Only vs. Backend-Assisted

Euphony is designed with a clean architectural separation. In frontend-only mode (configured via the VITE_EUPHONY_FRONTEND_ONLY=true environment variable), the entire application runs in the browser with no server dependencies. In backend-assisted mode, a local FastAPI Python server handles remote JSON/JSONL loading, backend translation, and Harmony rendering, which is especially useful when loading large datasets.

Embedding Euphony in Your Web Application

One of the most practical aspects for AI dev teams is that Euphony ships as reusable custom elements, standard Web Components that can be embedded in any frontend framework: React, Svelte, Vue, or plain HTML. After building the library with pnpm run build:library (which outputs the main entry point to ./lib/euphony.js), you can drop a

The technology stack is primarily TypeScript (78.7% of the codebase) with CSS and a Python backend layer, and it is released under the Apache License 2.0.

Key Takeaways

- OpenAI has open-sourced Euphony, a browser-based visualization tool that converts raw Harmony JSON/JSONL conversations and Codex session JSONL files into structured, browsable conversation timelines, with no custom log parsers required.

- Euphony supports four automatic detection cases: it recognizes lists of Harmony conversations, Codex session files, and conversations nested under top-level fields, and it falls back to rendering arbitrary data as raw JSON objects.

- The tool comes with a rich inspection feature set, including JMESPath filtering, focus mode (filter by role, recipient, or content type), conversation-level and message-level metadata inspection, a grid view for skimming datasets, and an in-browser JSONL editor mode.

- Euphony runs in two modes: a frontend-only mode recommended for static or external hosting, and an optional local backend-assisted mode powered by a FastAPI server that adds remote JSON/JSONL loading, backend translation, and Harmony rendering, with OpenAI explicitly warning against exposing the backend externally due to SSRF risk.

- Euphony is designed to be embedded: it ships as reusable Web Components (
