Meet ‘AutoAgent’: An Open Source Library That Enables AI Developers to Develop Their Own Agents Instantly

There is a particular kind of tedium that every AI developer knows intimately: the prompt-tuning loop. You write a system prompt, run your agent against a benchmark, read the failure traces, tweak the prompt, add a tool, and rerun. Repeat this a few times and you may move the needle. It is grunt work dressed up in Python files. Now a new open source library called AutoAgent, created by Kevin Gu at thirdlayer.inc, suggests an alternative: don't do that work yourself. Let an AI do it.

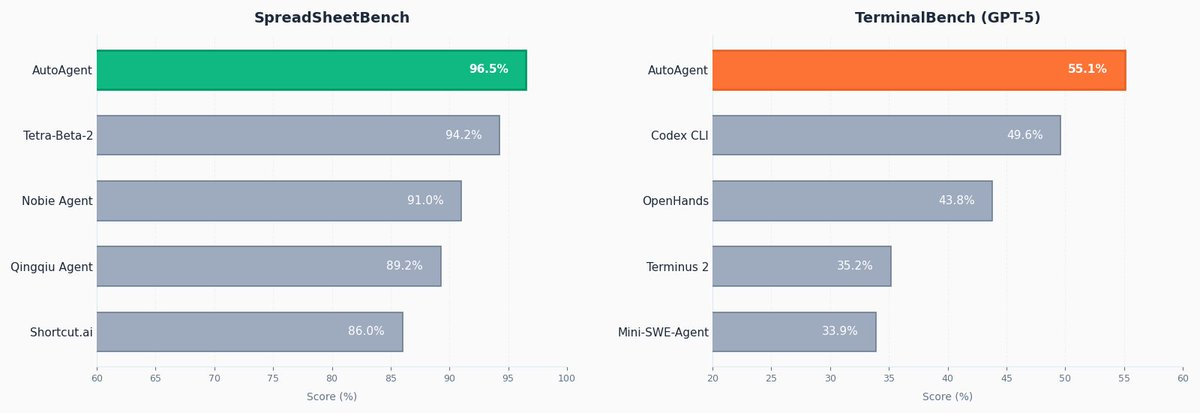

AutoAgent is an open source library for automatically developing an agent for any domain. Within 24 hours, it hit #1 on SpreadsheetBench with a score of 96.5%, and achieved the #1 GPT-5 score on TerminalBench with 55.1%.

What is AutoAgent, Really?

AutoAgent is described as ‘like autoresearch but for agent developers.’ The idea: give an AI agent a task and let it build and iterate on an agent harness autonomously overnight. It modifies the system prompt, tools, agent configuration, and orchestration, runs the benchmark, checks the score, keeps or discards the change, and repeats.

To understand the analogy: Andrej Karpathy’s autoresearch does the same thing for ML training. It loops through train-and-evaluate cycles, keeping only the changes that improve validation loss. AutoAgent ports that same ratchet loop from ML training to agent engineering. Instead of optimizing model weights or training hyperparameters, it optimizes the harness: the system prompt, tool definitions, routing logic, and orchestration strategy that determine how the agent behaves on a task.
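The ratchet loop described above can be sketched in a few lines. This is a minimal illustration, not AutoAgent's actual code: the names `ratchet`, `propose`, and `evaluate` are hypothetical, a "harness" is reduced to a plain string, and the toy scoring function stands in for a real benchmark run.

```python
import random
from typing import Callable, Tuple


def ratchet(
    initial: str,
    propose: Callable[[str], str],
    evaluate: Callable[[str], float],
    steps: int,
) -> Tuple[str, float]:
    """Hill-climb: keep a candidate only if it scores strictly higher."""
    best, best_score = initial, evaluate(initial)
    for _ in range(steps):
        candidate = propose(best)      # stand-in for the meta-agent editing the harness
        score = evaluate(candidate)    # stand-in for a benchmark run
        if score > best_score:         # keep improvements
            best, best_score = candidate, score
        # otherwise the candidate is discarded and the previous best is reused
    return best, best_score


if __name__ == "__main__":
    # Toy stand-ins (assumptions): the score is the fraction of
    # hypothetical target keywords the harness string mentions.
    targets = ["tools", "routing", "prompt"]
    rng = random.Random(0)

    def toy_propose(harness: str) -> str:
        return harness + " " + rng.choice(targets + ["noise"])

    def toy_evaluate(harness: str) -> float:
        return sum(t in harness for t in targets) / len(targets)

    print(ratchet("base", toy_propose, toy_evaluate, steps=20))
```

Because a change is kept only when the score strictly improves, the best score can never go down between iterations, which is what makes the loop a ratchet.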

A harness, in this context, is the scaffolding around an LLM: which system prompt it receives, which tools it can call, how it routes between sub-agents, and how tasks are formatted as inputs. Most agent developers build this scaffolding by hand. AutoAgent automates iteration on the scaffolding itself.

Architecture: Two Agents, One File, One Command

The GitHub repo has a deliberately simple structure. agent.py is the entire harness under test in a single file. It contains the configuration, tool definitions, agent registry, routing/orchestration, and the Harbor adapter boundary. The adapter section is explicitly marked as fixed; the rest is the meta-agent’s primary edit surface. program.md contains the meta-agent instructions and the directive (what kind of agent to build), and it is the only file the human edits.

Think of it as a separation of concerns between human and machine. The human sets the direction inside program.md. The meta-agent (a separate, higher-level AI) then reads that directive, inspects agent.py, runs the benchmark, diagnoses what failed, rewrites the relevant parts of agent.py, and repeats. The human never touches agent.py directly.

An important piece of infrastructure that keeps the loop coherent across iterations is results.tsv, an experiment log automatically created and maintained by the meta-agent. It tracks every experiment run, giving the meta-agent a history to learn from and to calibrate what to try next. The overall project structure also includes Dockerfile.base, an optional .agent/ directory for reusable agent workspace artifacts such as prompts and skills, a tasks/ folder for benchmark payloads (added per benchmark branch), and a jobs/ directory for Harbor job outputs.
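To make the role of the experiment log concrete, here is a small sketch of appending to and reading back a tab-separated log. The column names (`iteration`, `change`, `score`, `kept`) are assumptions chosen for illustration; the article does not describe the real results.tsv schema.

```python
import csv
from pathlib import Path
from typing import Dict, List

# Hypothetical columns; the actual results.tsv layout may differ.
FIELDS = ["iteration", "change", "score", "kept"]


def append_result(path: Path, row: Dict[str, str]) -> None:
    """Append one experiment to the log, writing a header on first use."""
    is_new = not path.exists()
    with path.open("a", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS, delimiter="\t")
        if is_new:
            writer.writeheader()
        writer.writerow(row)


def load_history(path: Path) -> List[Dict[str, str]]:
    """Read every logged experiment; an absent log means no history yet."""
    if not path.exists():
        return []
    with path.open(newline="") as f:
        return list(csv.DictReader(f, delimiter="\t"))


def best_kept_score(history: List[Dict[str, str]]) -> float:
    """Highest score among changes that were kept (0.0 if none)."""
    kept = [float(r["score"]) for r in history if r["kept"] == "yes"]
    return max(kept, default=0.0)
```

A plain append-only file like this is easy for both a human and a meta-agent to read, and it survives restarts of the loop.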

The metric is the total score produced by the benchmark’s task test suites. The meta-agent hill-climbs on this score. Every experiment produces a numeric score: keep the change if it is better, discard it if not. It is the same loop as autoresearch.

Task Format and Harbor Integration

Benchmarks are expressed as tasks in Harbor format. Each task lives under tasks/my-task/ and includes a task.toml for configuration such as timeouts and metadata, an instruction.md containing the prompt sent to the agent, a tests/ directory with a test.sh entry point that writes a score to /logs/reward.txt, and a test.py for verification using either deterministic checks or LLM-as-judge. An environment/Dockerfile defines the task container, and a files/ directory holds reference files included in the container. The tests write a score between 0.0 and 1.0 to the verifier log. The meta-agent hill-climbs on this score.
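As a rough sketch of what a deterministic check in a test.py could look like, the snippet below scores an agent's output against expected values and writes the result as a number between 0.0 and 1.0. The function names and the dictionary-based comparison are assumptions for illustration; only the /logs/reward.txt path and the 0.0 to 1.0 range come from the description above.

```python
from pathlib import Path
from typing import Dict


def score_answers(expected: Dict[str, str], actual: Dict[str, str]) -> float:
    """Fraction of expected key/value pairs the agent reproduced exactly."""
    if not expected:
        return 0.0
    hits = sum(1 for key, value in expected.items() if actual.get(key) == value)
    return hits / len(expected)


def write_reward(score: float, path: Path = Path("/logs/reward.txt")) -> float:
    """Clamp the score to [0.0, 1.0] and write it where the harness reads it."""
    clamped = min(1.0, max(0.0, score))
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(f"{clamped}\n")
    return clamped
```

In a task container, the test.sh entry point would presumably invoke a script like this after the agent finishes; passing the path as a parameter keeps the check runnable outside the container as well.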

The LLM-as-judge pattern here is worth flagging: instead of only checking answers deterministically (as unit tests do), the test suite can use another LLM to evaluate whether the agent’s output is ‘good enough.’ This is common in agentic benchmarks where correct answers are not reducible to string matching.
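One way to combine the two styles of verification is to try an exact match first and fall back to a judge only when it fails. The sketch below assumes a hypothetical `judge` callable that returns a rating between 0.0 and 1.0; the `threshold` value and the word-overlap `stub_judge` are placeholders so the example runs without calling a real model.

```python
from typing import Callable

# (instruction, reference, output) -> rating in [0.0, 1.0]
Judge = Callable[[str, str, str], float]


def verify(
    instruction: str,
    reference: str,
    output: str,
    judge: Judge,
    threshold: float = 0.7,  # assumed cutoff for "good enough"
) -> float:
    """Exact match scores 1.0; otherwise defer to the judge's rating."""
    if output.strip() == reference.strip():
        return 1.0
    rating = min(1.0, max(0.0, judge(instruction, reference, output)))
    return rating if rating >= threshold else 0.0


def stub_judge(instruction: str, reference: str, output: str) -> float:
    """Placeholder for a real LLM call: word overlap with the reference."""
    ref = set(reference.lower().split())
    out = set(output.lower().split())
    return len(ref & out) / len(ref) if ref else 0.0
```

Injecting the judge as a function keeps the deterministic path cheap and lets the model-backed path be swapped or mocked in tests.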

Key Takeaways

- Autonomous harness engineering works: AutoAgent shows that a meta-agent can replace the manual prompt-tuning loop, iterating on agent.py overnight without anyone touching the harness file directly.

- Benchmark results validate the approach: Within 24 hours, AutoAgent scored #1 on SpreadsheetBench (96.5%) and the top GPT-5 score on TerminalBench (55.1%), beating all other hand-engineered entries.

- ‘Model empathy’ may be a real phenomenon: A Claude meta-agent optimizing a Claude task agent appeared to diagnose failures more accurately than when optimizing a GPT-based agent, which suggests that pairing models from the same family can matter when designing your AutoAgent loop.

- The human’s job shifts from engineer to director: You don’t write or edit agent.py. You write program.md, a plain Markdown directive that steers the meta-agent. The distinction reflects a broader shift in agent engineering from coding to policy setting.

- Plug-and-play with any benchmark: Because tasks follow Harbor’s open format and agents run in Docker containers, AutoAgent is domain-agnostic. Any task that can be scored (spreadsheets, terminal commands, or your custom domain) can be a target for optimization.
