Top 19 AI tools for the red team (2026): Protect your ML models

What is AI Red Teaming?

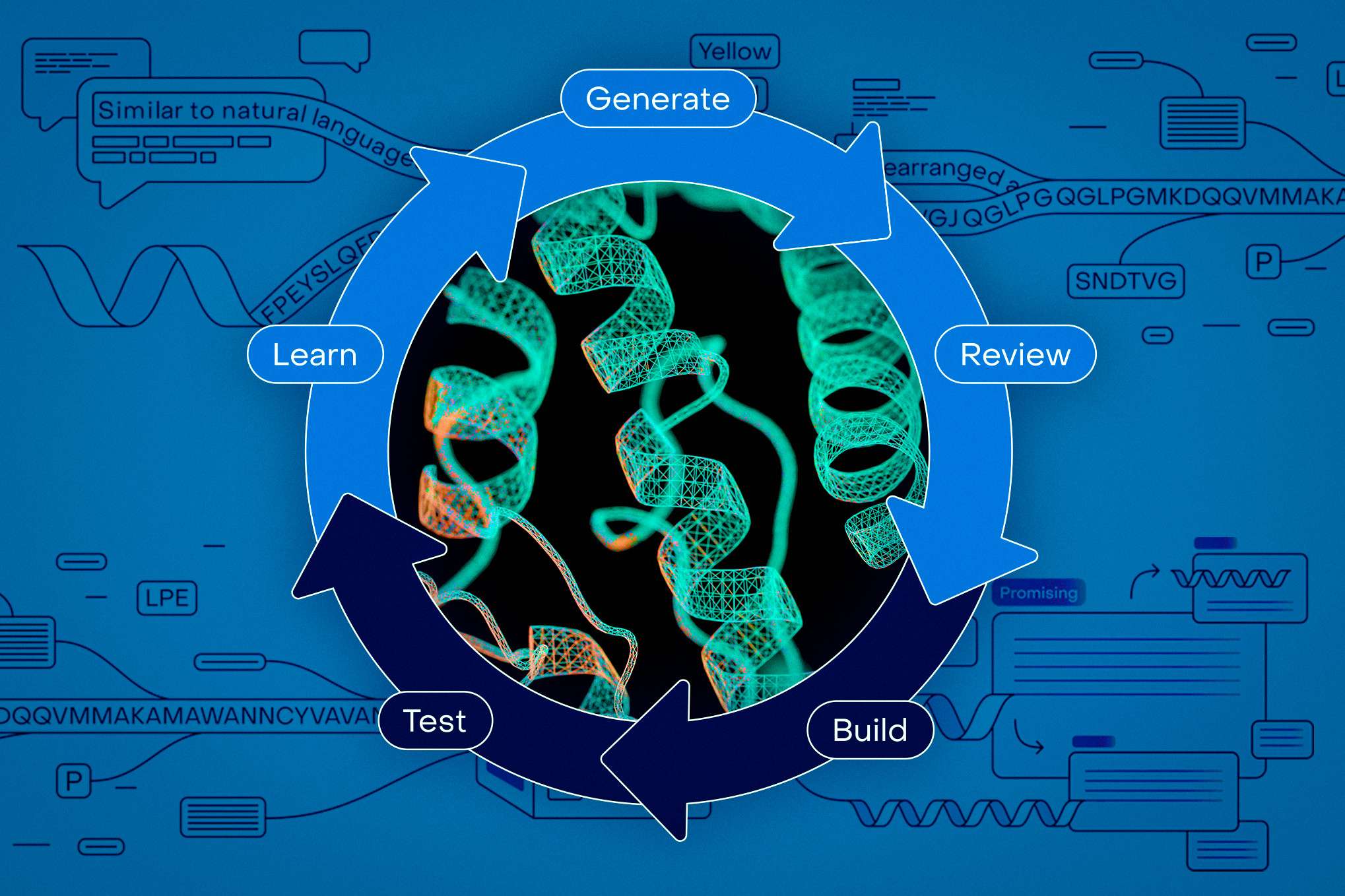

AI Red Teaming is the process of systematically testing artificial intelligence systems—especially generative AI and machine learning models—against adversary attacks in security stress scenarios. The red team passes the classic entrance test; while penetration testing targets known software flaws, the team red checks for AI-specific vulnerabilities, unexpected vulnerabilities, and emerging behaviors. The process adopts the concept of a malicious adversary, simulating attacks such as rapid injection, data poisoning, jailbreaking, model evasion, bias exploitation, and data leakage. This ensures that AI models are not only robust against traditional threats, but also resilient to new abuse scenarios that are unique to current AI systems.

Key Features and Benefits

- Threat Modeling: Identify and simulate all possible attack scenarios—from rapid injection to adversary deception and data extraction.

- Anti-Realistic Behavior: Simulates real attacker techniques using both manual and automated tools, beyond what is covered in penetration testing.

- Risk Discovery: Reveals risks such as biases, fairness gaps, privacy exposures, and integrity failures that may not have surfaced in previous release tests.

- Compliance with the Law: Supports compliance requirements (EU AI Law, NIST RMF, US Executive Orders) in terms of authorizing red collaboration for high-risk AI deployments.

- Ensuring Continuous Safety: Integrates into CI/CD pipelines, enabling continuous risk assessment and resilience development.

Red collaboration can be done by internal security teams, specialized third parties, or platforms designed solely for adversarial testing of AI systems.

Top 19 AI Red Teaming Tools (2026)

Below is a rigorously researched list of the latest and most respected AI red team tools, frameworks, and platforms—including open source, commercial, and industry-leading solutions for both general and AI-specific attacks:

- Mindgard – Red team AI automation and model vulnerability assessment.

- MIND.io – A data security platform that provides independent DLP and data discovery and response (DDR) for Agent AI.

- Garak – An open source LLM adversary analysis toolkit.

- HiddenLayer- A comprehensive AI security platform that provides automated model scanning and red collaboration.

- AIF360 (IBM) – AI Fairness 360 toolkit for assessing bias and fairness.

- Foolbox – A library for attacking enemies in AI models.

- Penligent- An AI-powered penetration testing tool that doesn’t require expert knowledge

- Giskard- A comprehensive evaluation of traditional machine learning models and Agentic AI

- Adversarial Robustness Toolbox (ART) – IBM’s open source toolkit for ML model security.

- FuzzyAI- A powerful automated LLM fuzzing tool

- BurpGPT – Web security automation using LLMs.

- CleverHans – Estimating ML attacks.

- Counterfit (Microsoft) – CLI for testing and simulating ML model attacks.

- Dreadnode Crucible – ML/AI vulnerability detection and red team toolkit.

- Galah – An AI honeypot framework that supports LLM use cases.

- Meerkat – Data visualization and adversarial ML testing.

- Ghidra/GPT-WPRE – Reverse engineering platform with LLM analysis plugins.

- Guardrails – Application security for LLMs, to prevent immediate injection.

- Snyk – A developer-focused LLM red tool that simulates quick injection and attacks from your opponents.

The conclusion

In the era of spawning AI and Large Language Models, AI Red Teaming has become the basis for responsible and robust AI deployment. Organizations must embrace adversary assessment to uncover hidden vulnerabilities and adapt their defenses to new threats—including agile engineering-driven attacks, data leaks, biased exploits, and emerging behavioral patterns. The best practice is to combine manual expertise with automated platforms using the top red integration tools listed above to get a full, effective security posture for AI systems.

Look at ours Twitter page and don’t forget to join our 130k+ ML SubReddit and Subscribe to Our newspaper. Wait! are you on telegram? now you can join us on telegram too.

Need to work with us on developing your GitHub Repo OR Hug Face Page OR Product Release OR Webinar etc.? contact us

Michal Sutter is a data science expert with a Master of Science in Data Science from the University of Padova. With a strong foundation in statistical analysis, machine learning, and data engineering, Michal excels at turning complex data sets into actionable insights.