Vision AI: How to Train for High-Quality Results in the Real World

Vision AI is moving from demos to production. It’s used to inspect products, monitor sites, support security workflows, and help systems understand what’s happening in photos and video streams. As deployments grow, the cost of poor training grows with them. A model that works well on a clean test set can still break down in the real world when lighting changes, objects overlap, or the environment shifts over time.

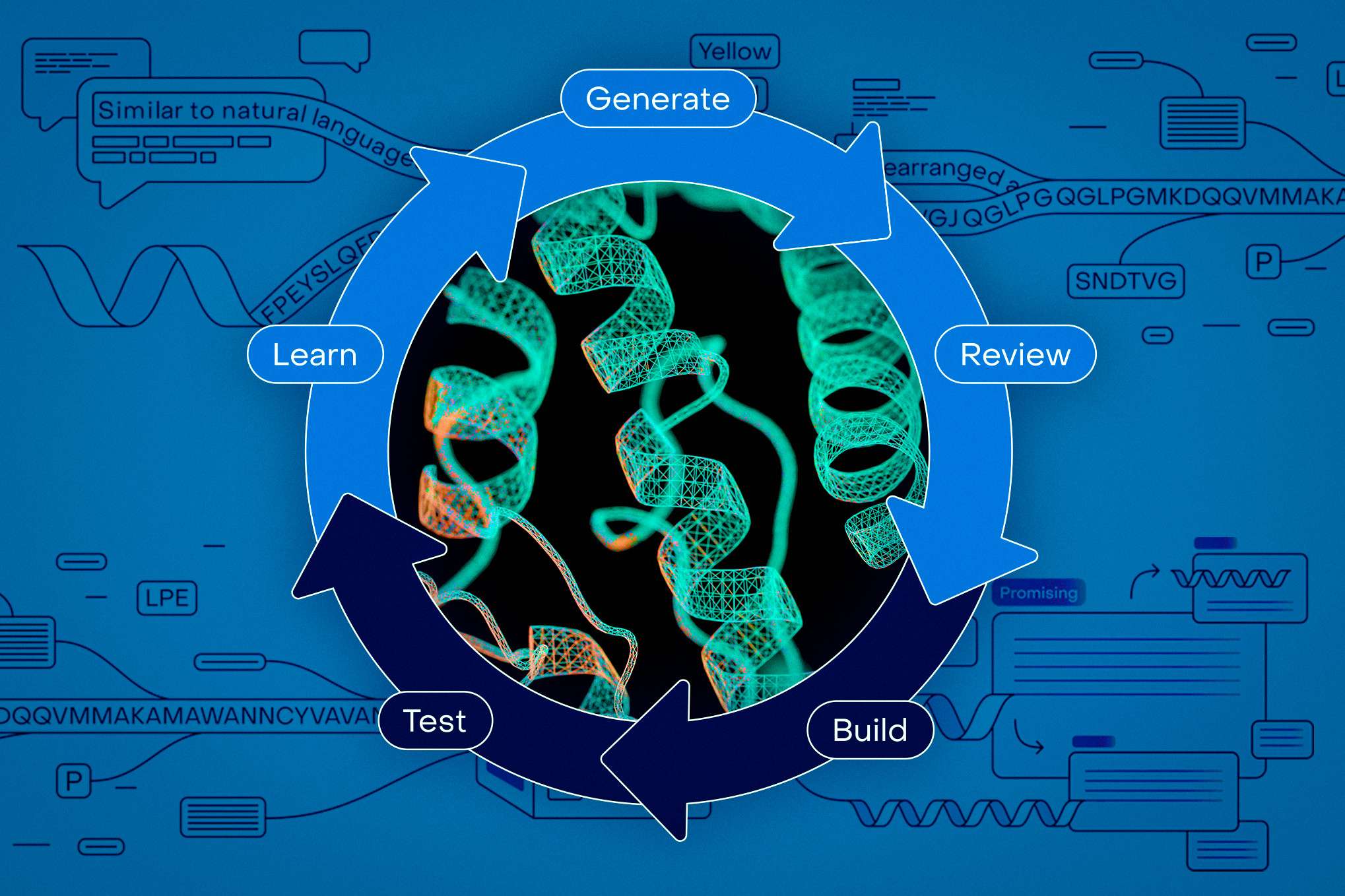

That’s why the most effective vision AI programs often look less like one-time model training and more like an operational discipline. They include robust data collection, clear annotation rules, domain expertise, synthetic data where it helps, and ongoing monitoring after deployment. The goal is not just high accuracy on paper. It’s reliable performance when the scene gets messy.

Why training quality is more important than a new model

Most teams start out by focusing on architecture. That’s important, but in vision AI, data quality often determines whether a project makes it to production. If your images are labeled inconsistently, your defect categories are unclear, or your edge cases are missing, the model learns a fuzzy version of the truth.

A simple analogy is teaching someone to referee a game using only highlight clips. They may recognize obvious plays, but they will struggle with bad angles, partial views, and borderline calls. Vision AI behaves similarly. It needs more than clean examples. It needs hard cases too.

Start with the data, not the dashboard

Before training begins, define what the model needs to see and what counts as success. That means deciding whether the task is object detection, classification, segmentation, tracking, anomaly detection, or spatial understanding. It also means agreeing on label definitions in advance.

For example, if a system is intended to flag defects on a production line, what exactly qualifies as a defect? Does partial occlusion change the label? Does glare count as a bad example or a special case? These decisions shape the dataset long before they shape the model.
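One way to make such definitions concrete is to write the labeling rules down as code that annotators and reviewers can test against. The sketch below is only an illustration: the `Label` values, the `Observation` fields, and the `MAX_OCCLUSION_FOR_OK` threshold are hypothetical and would need to come from your own annotation guide.

```python
from dataclasses import dataclass
from enum import Enum


class Label(Enum):
    DEFECT = "defect"
    OK = "ok"
    NEEDS_REVIEW = "needs_review"


@dataclass(frozen=True)
class Observation:
    defect_visible: bool      # annotator can see a defect in the visible region
    occluded_fraction: float  # share of the part hidden from the camera, 0.0 to 1.0
    glare: bool               # a strong reflection covers part of the surface


# Hypothetical threshold: above this, the part is too hidden to call "ok".
MAX_OCCLUSION_FOR_OK = 0.3


def assign_label(obs: Observation) -> Label:
    """Apply the written annotation rules in a fixed order."""
    if obs.defect_visible:
        return Label.DEFECT
    if obs.glare or obs.occluded_fraction > MAX_OCCLUSION_FOR_OK:
        # No defect is visible, but too much of the part cannot be judged.
        return Label.NEEDS_REVIEW
    return Label.OK
```

Writing the rules this way forces the team to answer the occlusion and glare questions once, instead of leaving each annotator to decide case by case.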

This is where services like data collection, data annotation, and data support for computer vision training become important. A robust upstream workflow helps teams standardize image formats, gather broad coverage, and reduce ambiguity before it spreads into the labels.

Why generic labeling is not good enough

Generic annotation is useful for simple tasks, but high-value vision AI is often context-dependent. A manufacturing specialist may catch subtle defect patterns that look normal to the average reviewer. A security professional can distinguish between normal movement and credible risk. A medical reviewer may see why one imaging pattern matters while another does not.

That difference shows most clearly in the edge cases. The hardest mistakes in vision AI tend to occur in ambiguous, unusual, or high-stakes situations. That’s why domain-expert labeling is so important when teams move from prototypes to production.

Synthetic data is useful, but only if it is used with purpose

Synthetic imagery and video can help when real-world data is scarce, dangerous, expensive, or slow to collect. They are especially useful for rare defects, hazardous situations, and underrepresented conditions. But synthetic data is not magic. If it is too clean or too narrow, the model can be good at simulated reality and poor at actual reality.

The best use of synthetic data is often targeted augmentation. It fills in gaps, increases variety, and prepares the model for events that don’t happen often enough in real images.
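A minimal sketch of that idea, assuming synthetic (artificially generated) samples are kept as a capped supplement to real ones. The function name `mix_real_and_synthetic` and the default cap of 0.3 are illustrative choices, not recommendations from the article.

```python
import random


def mix_real_and_synthetic(real, synthetic, max_synthetic_share=0.3, seed=0):
    """Return a shuffled training list in which synthetic items are capped.

    max_synthetic_share is the largest allowed fraction of synthetic items
    in the final mix (an illustrative default, not a recommendation).
    Every real item is kept; synthetic items are randomly subsampled.
    """
    if not 0.0 <= max_synthetic_share < 1.0:
        raise ValueError("max_synthetic_share must be in [0, 1)")
    rng = random.Random(seed)
    # Solve s / (len(real) + s) <= share for the synthetic count s.
    max_synthetic = int(len(real) * max_synthetic_share / (1.0 - max_synthetic_share))
    chosen = rng.sample(synthetic, min(max_synthetic, len(synthetic)))
    mixed = [(item, "real") for item in real] + [(item, "synthetic") for item in chosen]
    rng.shuffle(mixed)
    return mixed
```

Tagging each item with its source also makes it possible to report real-only and synthetic-only metrics later, which helps reveal a model that has learned the simulator rather than the scene.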

Train for the context of the scene, not just the presence of the object

A strong vision AI system does more than detect objects in pixels. It interprets what is happening in context. A crowded corridor may be normal at one hour and a danger signal at another. A stationary vehicle may be harmless in one area and dangerous in another. A feature may matter only when it is combined with a specific location, movement pattern, or operational situation.

This is why high-quality systems increasingly rely on richer labeling and evaluation methods rather than on a single narrow performance metric.

A short story: when the model looked accurate until the night shift

Imagine a retailer using vision AI to identify spill hazards and blocked aisles. During the pilot, the results look strong. Daytime images are clear, labels are tidy, and the model catches the most obvious cases.

Then the night shift begins. The light is dim. The floor looks different. Cleaning carts partially block the camera’s view. Workers move in different patterns. All of a sudden, the system misses real hazards and flags safe activity.

The original model was not badly wrong; it was incomplete. The training data showed one slice of the environment, not the whole of it. When the team added nighttime images, edge-case annotations, and reviewer feedback from store operators, performance improved because the model finally learned from the situations it would actually encounter.
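A gap like this is easier to see when results are broken down by capture condition instead of being reported as one overall number. The sketch below assumes each evaluation record carries a condition tag; the record layout and the pilot numbers are made up for illustration.

```python
from collections import defaultdict


def recall_by_condition(records):
    """Compute recall for each capture condition.

    Each record is (condition, hazard_present, hazard_predicted).
    Recall = detected hazards / actual hazards, per condition.
    """
    hits = defaultdict(int)
    actual = defaultdict(int)
    for condition, present, predicted in records:
        if present:
            actual[condition] += 1
            if predicted:
                hits[condition] += 1
    return {c: hits[c] / actual[c] for c in actual}


# Made-up pilot results, for illustration only.
records = (
    [("day", True, True)] * 9 + [("day", True, False)] * 1
    + [("night", True, True)] * 4 + [("night", True, False)] * 6
)
print(recall_by_condition(records))  # {'day': 0.9, 'night': 0.4}
```

In this toy data the overall recall is 13 out of 20, or 0.65, which hides the fact that the night slice catches fewer than half of the hazards.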

Decision framework: when to add more data, more experts, or more feedback

An effective way to improve a vision AI system is to ask four questions:

- What types of misses are most important? False negatives are especially costly in security, healthcare, retail, and manufacturing.

- What conditions are underrepresented? Look for variations in lighting, motion blur, occlusion, seasonal changes, changing camera angles, and rare events.

- Where does human judgment change the label? This is where subject matter experts come in handy.

- What will you monitor after deployment? Accuracy is not enough. Teams must watch miss rates, drift, latency, and performance under changing real-world conditions.
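For the monitoring question, one simple starting point is a rolling miss rate over hazards that operators have confirmed. This is a sketch under assumptions: the `MissRateMonitor` class, its window size, and its alert threshold are hypothetical and should be set from your own risk tolerance.

```python
from collections import deque


class MissRateMonitor:
    """Track the miss rate over the most recent operator-confirmed hazards."""

    def __init__(self, window=200, alert_threshold=0.10):
        # Both defaults are placeholders, not recommended values.
        self.outcomes = deque(maxlen=window)  # oldest entries drop off automatically
        self.alert_threshold = alert_threshold

    def record(self, model_detected: bool) -> None:
        """Log one confirmed real hazard and whether the model caught it."""
        self.outcomes.append(model_detected)

    def miss_rate(self) -> float:
        """Fraction of hazards in the window that the model failed to detect."""
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def needs_attention(self) -> bool:
        """True when the rolling miss rate exceeds the alert threshold."""
        return self.miss_rate() > self.alert_threshold
```

A rolling window reacts to recent change, such as a new camera angle or a seasonal lighting shift, that a lifetime average would smooth away.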

What good vision AI practice looks like

The strongest training programs often share a few habits. They standardize the data before labeling. They create annotation guides with varied examples and explicit rules. They add QA checks instead of assuming all labels are equally reliable. They use synthetic data to fill real gaps, not to replace real data. They also create post-deployment feedback loops so operators can flag misses and feed that information back into retraining.
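One common QA check is to have two annotators label the same sample and measure how far their agreement exceeds chance. The sketch below computes Cohen's kappa with the standard library only; using kappa here is an illustrative choice, not something the article prescribes.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items.

    1.0 means perfect agreement, 0.0 means agreement no better than chance.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set")
    n = len(labels_a)
    # Observed agreement: share of items given the same label by both.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected agreement if each annotator labeled at random with their own label mix.
    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)
```

A low score on a particular class is often a sign that the annotation guide is ambiguous for that class, rather than that one annotator is careless.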

This is also why many teams treat vision projects as continuous data operations rather than one-off model experiments. A robust infrastructure for training data and update cycles makes it easier to keep models useful as the world changes around them.

The conclusion

High-quality results in vision AI do not come from scale alone. They come from better judgment about what to collect, how to label, where to use experts, when to simulate edge cases, and how to measure performance after deployment.

In other words, training vision AI is not like filling a tank. It’s like coaching a team through changing game conditions. The best systems are trained on real-world examples, challenged with difficult situations, and continuously improved once they enter the field.