
Bias Testing Coding Guide Using Facebook Audit Balance with IPW CBPS Standard and Post Editing Methods

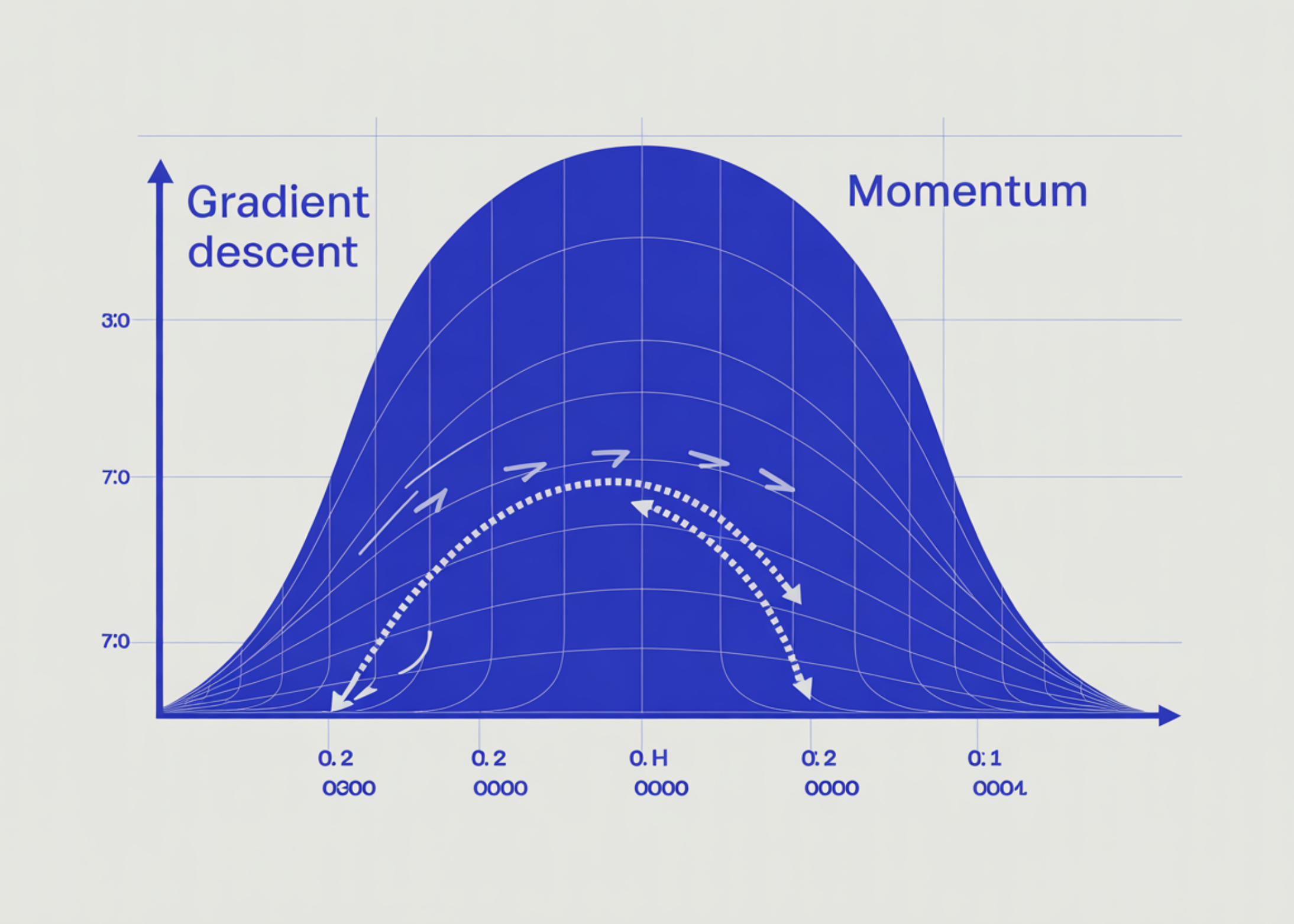

fig, axes = plt.subplots(2, 2, figsize=(14, 10))

colors_a = ["gray", "#1f77b4", "#ff7f0e", "#2ca02c", "#d62728"][: len(asmd_means)]

axes[0, 0].bar(list(asmd_means.keys()), list(asmd_means.values()), color=colors_a)

axes[0, 0].axhline(0.1, ls="--", color="red", label="0.10 imbalance threshold")

axes[0, 0].set_title("Mean ASMD across covariates")

axes[0, 0].set_ylabel("Mean ASMD"); axes[0, 0].legend()

axes[0, 0].tick_params(axis="x", rotation=20)

truth = target_df["happiness"].mean()

colors_b = ["#888"] + ["#1f77b4", "#ff7f0e", "#2ca02c", "#d62728"][: len(methods)] + ["black"]

axes[0, 1].bar(list(outcome_means.keys()), list(outcome_means.values()),

color=colors_b[: len(outcome_means)])

axes[0, 1].axhline(truth, ls="--", color="black", label=f"truth = {truth:.2f}")

axes[0, 1].set_title("Estimated mean happiness vs ground truth")

axes[0, 1].set_ylabel("Mean happiness"); axes[0, 1].legend()

axes[0, 1].tick_params(axis="x", rotation=20)

w_ipw = adjusted_ipw.to_df()["weight"].values

axes[1, 0].hist(w_ipw, bins=40, color="steelblue", edgecolor="white")

axes[1, 0].set_title(

f"IPW weight distribution\n"

f"min={w_ipw.min():.2f} median={np.median(w_ipw):.2f} max={w_ipw.max():.2f}"

)

axes[1, 0].set_xlabel("weight"); axes[1, 0].set_ylabel("count")

ages = sample_df["age"].values

bins = np.linspace(18, 90, 31)

axes[1, 1].hist(target_df["age"], bins=bins, density=True, alpha=0.45,

color="green", label="Target (truth)")

axes[1, 1].hist(ages, bins=bins, density=True, alpha=0.45,

color="red", label="Sample (biased)")

axes[1, 1].hist(ages, bins=bins, density=True, alpha=0.45,

color="blue", weights=w_ipw, label="Sample (IPW-weighted)")

axes[1, 1].set_title("Age distribution: bias correction by IPW")

axes[1, 1].set_xlabel("Age"); axes[1, 1].set_ylabel("density"); axes[1, 1].legend()

plt.tight_layout()

plt.savefig("balance_diagnostics.png", dpi=110, bbox_inches="tight")

plt.show()
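The first panel plots the mean absolute standardized mean difference (ASMD) against the 0.10 threshold. As a rough illustration of what that number measures, the sketch below computes an ASMD for a single numeric covariate with plain NumPy. The function name `asmd` and the toy arrays are hypothetical, and scaling by the target's standard deviation is an assumption; the library's own calculation may differ in its details.

```python
import numpy as np

def asmd(sample_x, target_x, sample_w=None):
    """Absolute standardized mean difference for one numeric covariate.

    Assumption: the gap between the (weighted) sample mean and the target
    mean is scaled by the target's standard deviation. Other conventions
    (pooled or sample standard deviation) also exist.
    """
    sample_x = np.asarray(sample_x, dtype=float)
    target_x = np.asarray(target_x, dtype=float)
    if sample_w is None:
        sample_w = np.ones_like(sample_x)  # unweighted case
    sample_mean = np.average(sample_x, weights=sample_w)
    target_mean = target_x.mean()
    target_sd = target_x.std(ddof=1)
    return abs(sample_mean - target_mean) / target_sd

# Toy data (hypothetical): the sample over-represents younger people.
rng = np.random.default_rng(0)
target_age = rng.normal(45, 15, size=5000)
sample_age = rng.normal(35, 12, size=1000)

asmd_unweighted = asmd(sample_age, target_age)
print(f"unweighted ASMD for age: {asmd_unweighted:.3f}")
```

Passing a weight vector as `sample_w` gives the post-adjustment ASMD for the same covariate, which is the quantity one would hope to see fall below the dashed threshold line.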

print("\n" + "=" * 60)

print(" ADVANCED — controlling variance with max_de")

print("=" * 60)

print("max_de=1.5 trims extreme weights so the design effect stays ≤ 1.5,")

print("trading a little bias for tighter confidence intervals.\n")

adjusted_trim = sample_with_target.adjust(method="ipw", max_de=1.5)

print(adjusted_trim.summary())
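The `max_de` cap is easier to interpret with the design effect written out. A common definition is Kish's approximation, n·Σw² / (Σw)², and the effective sample size is the nominal n divided by it. The sketch below assumes that definition; the helper names are hypothetical and are not part of the library.

```python
import numpy as np

def kish_design_effect(weights):
    """Kish's approximate design effect: n * sum(w^2) / (sum(w))^2."""
    w = np.asarray(weights, dtype=float)
    return len(w) * np.sum(w ** 2) / np.sum(w) ** 2

def effective_sample_size(weights):
    """Nominal sample size divided by the design effect."""
    w = np.asarray(weights, dtype=float)
    return len(w) / kish_design_effect(w)

equal = np.ones(100)                # equal weights: no variance penalty
skewed = np.r_[np.ones(99), 50.0]   # a single extreme weight dominates

print("design effect, equal weights :", kish_design_effect(equal))
print("design effect, skewed weights:", kish_design_effect(skewed))
print("effective n, skewed weights  :", effective_sample_size(skewed))
```

With equal weights the design effect is exactly 1. One very large weight pushes it well above the 1.5 cap, which is the situation that trimming is meant to prevent.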

out = adjusted_ipw.to_df()

out.to_csv("balance_weighted_sample.csv", index=False)

print("\nSaved weighted sample → balance_weighted_sample.csv")

print("Saved diagnostics plot → balance_diagnostics.png")

print("\nFirst 5 rows of weighted output:")

print(out.head())

err_naive = abs(sample_df["happiness"].mean() - truth)

err_ipw = abs(outcome_means["IPW"] - truth)

print("\n" + "=" * 60)

print(" BIAS REDUCTION SUMMARY")

print("=" * 60)

print(f"Naive estimator error : {err_naive:.3f}")

print(f"IPW estimator error : {err_ipw:.3f}")

print(f"Bias reduction : {(1 - err_ipw / max(err_naive, 1e-9)) * 100:.1f}%")
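The summary above compares a naive mean with a weighted one. The following self-contained sketch shows how a weighted mean produces those error figures, using a simulated population whose selection probabilities are known. All names and numbers are hypothetical. The weights are the true inverse probabilities, which a real analysis would have to estimate from covariates, so the result will look cleaner than anything obtained in practice.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical population: the outcome rises with age.
age = rng.uniform(18, 90, size=50_000)
outcome = 5.0 + 0.03 * (age - 18) + rng.normal(0, 1, size=age.size)
truth = outcome.mean()

# Biased selection: younger people are more likely to respond.
p_select = np.clip(0.30 - 0.003 * (age - 18), 0.02, None)
selected = rng.random(age.size) < p_select

# Inverse probability weights from the known selection probabilities
# (an idealisation; in practice these are estimated).
w = 1.0 / p_select[selected]
y = outcome[selected]

naive_mean = y.mean()
weighted_mean = np.sum(w * y) / np.sum(w)  # same as np.average(y, weights=w)

err_naive = abs(naive_mean - truth)
err_weighted = abs(weighted_mean - truth)
reduction = (1 - err_weighted / max(err_naive, 1e-9)) * 100

print(f"naive error    : {err_naive:.3f}")
print(f"weighted error : {err_weighted:.3f}")
print(f"bias reduction : {reduction:.1f}%")
```

The `max(err_naive, 1e-9)` guard mirrors the one in the summary code: it avoids a division by zero when the naive estimate already matches the truth.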