Why Downtime Is Still a Surprise for IT Teams

Downtime should be predictable by now. With advanced monitoring systems, cloud infrastructure, and AI-driven analytics, IT teams are better equipped than ever. Yet outages still happen without warning, and when they do, they disrupt operations, damage customer trust, and cost real money.

So what’s going on?

The truth is, downtime is usually not caused by a single failure. It is the result of hidden gaps that build up across systems, tools, and processes. Let’s unpack why IT teams are still caught off guard.

The Illusion of Perfect Visibility

Many organizations believe they have full visibility into their systems. Dashboards are live, alerts are configured, and logs are captured in real time.

But visibility doesn’t always mean clarity.

Teams tend to rely on isolated metrics: CPU usage, memory load, latency spikes. These signals, while helpful, do not tell the full story. Without context, they become noise rather than insight.

Here’s where the gap comes in:

- Metrics are monitored individually, not in groups

- Alerts fire after the impact begins, not before

- Root cause analysis becomes reactive rather than proactive

What this means is simple: teams see data, but they don’t always understand it.
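
One way to picture the difference between watching metrics one at a time and watching them together is a composite check that only fires when several signals are abnormal at once. The sketch below is a minimal illustration, not a description of any particular monitoring product: the `Sample` fields, the threshold values, and the `correlated_alert` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One point-in-time reading for a service (hypothetical fields)."""
    cpu_pct: float
    mem_pct: float
    p95_latency_ms: float


# Illustrative thresholds only; real values depend on the system.
THRESHOLDS = {"cpu_pct": 85.0, "mem_pct": 90.0, "p95_latency_ms": 500.0}


def breached(sample: Sample) -> list[str]:
    """Return the names of the metrics that exceed their threshold."""
    return [name for name, limit in THRESHOLDS.items()
            if getattr(sample, name) > limit]


def correlated_alert(sample: Sample, min_signals: int = 2) -> bool:
    """Fire only when at least `min_signals` metrics are abnormal together."""
    return len(breached(sample)) >= min_signals


busy_but_fine = Sample(cpu_pct=92.0, mem_pct=40.0, p95_latency_ms=120.0)
degrading = Sample(cpu_pct=92.0, mem_pct=60.0, p95_latency_ms=800.0)

print(correlated_alert(busy_but_fine))  # False: high CPU alone
print(correlated_alert(degrading))      # True: high CPU plus slow responses
```

A high CPU reading on its own stays quiet here, while high CPU combined with slow responses raises the alert. That is the kind of context a single-metric threshold cannot provide.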

Growing Complexity, Shrinking Control

Modern IT environments are no longer straightforward. Applications are distributed across microservices, containers, and third-party APIs. Each component presents its own dependencies and risks.

With this complexity comes a serious challenge—control is no longer centralized.

Key facts of modern infrastructure:

- A single user request can go through multiple services

- A failure in one component can cascade through the entire system

- Dependencies are often outside direct control

As systems grow, identifying the source of a failure becomes harder. When something breaks, it feels sudden, but in reality it is the result of interconnected weaknesses.
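
A bit of arithmetic shows why long request paths are fragile. If a request needs every service in a chain to succeed, and the services fail independently (a simplifying assumption), the combined availability is the product of the individual ones. The figures below are hypothetical and only illustrate the effect.

```python
import math


def serial_availability(availabilities: list[float]) -> float:
    """Availability of a request that needs every listed service to succeed,
    assuming the services fail independently of each other."""
    return math.prod(availabilities)


# Ten hypothetical services, each available 99.9% of the time.
chain = [0.999] * 10
combined = serial_availability(chain)
print(f"{combined:.4f}")  # 0.9900
```

Ten services at 99.9% each yield roughly 99.0% for the whole path, so the request as a whole fails about ten times as often as any single service does.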

Tool Overload Creates Blind Spots

Ironically, having too many tools can make teams less effective.

Organizations often use multiple platforms for monitoring, logging, performance tracking, and cloud management. While each tool has a specific purpose, they rarely integrate seamlessly.

This leads to:

- Fragmented data across platforms

- No unified view of the system

- Slow incident diagnosis

Instead of fixing the issue quickly, teams spend valuable time switching between tools and piecing information together. By the time clarity emerges, the downtime has already grown.

Alert Fatigue Slows Response

Alerts are meant to help—but too many alerts do the opposite.

When engineers are bombarded with constant notifications, they start filtering them out mentally. Low-priority alerts and false positives dull the sense of urgency.

The consequences are serious:

- Critical alerts get overlooked

- Response times increase

- Early signs of failure are missed

Over time, teams stop trusting alerts altogether. And when a real problem arises, it doesn’t get the attention it deserves until it’s too late.
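
One common way to cut alert noise is to suppress repeats of the same alert inside a time window, so that each distinct problem produces one notification rather than a stream. The sketch below assumes a hypothetical `AlertSuppressor` class and made-up alert keys and timestamps; it illustrates the idea and is not the behavior of any specific alerting tool.

```python
from dataclasses import dataclass, field


@dataclass
class AlertSuppressor:
    """Drops repeats of the same alert key seen within `window_s` seconds."""
    window_s: float = 300.0
    _last_sent: dict[str, float] = field(default_factory=dict)

    def should_notify(self, key: str, now: float) -> bool:
        last = self._last_sent.get(key)
        if last is not None and now - last < self.window_s:
            return False  # duplicate inside the window: stay quiet
        self._last_sent[key] = now
        return True


suppressor = AlertSuppressor(window_s=300.0)

# (alert key, timestamp in seconds); hypothetical events.
events = [("db-latency", 0.0), ("db-latency", 60.0),
          ("disk-full", 90.0), ("db-latency", 400.0)]
sent = [(key, t) for key, t in events if suppressor.should_notify(key, t)]
print(sent)
# [('db-latency', 0.0), ('disk-full', 90.0), ('db-latency', 400.0)]
```

The repeat at 60 seconds is dropped, while a different alert and a recurrence after the window both get through. The window length is a judgment call: too short and the noise returns, too long and a recurring problem stays hidden.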

Reactive Mindset Over Proactive Strategy

Most IT teams still work in reactive mode. Systems are monitored, but not challenged.

Failures often occur under unexpected conditions: traffic surges, rare bugs, or third-party disruptions. Without deliberate testing, these scenarios remain unexercised until they occur in production.

Common gaps include:

- Limited use of load testing or chaos engineering

- Overreliance on pre-deployment testing

- Little simulation of real-world stress scenarios

This points to a deeper problem: stability is assumed, not continuously verified.
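
To make the idea of challenging a system concrete, here is a minimal fault-injection sketch in the spirit of chaos engineering. It wraps a stand-in function so that a chosen fraction of calls fail, then measures how often a simple retry policy still cannot recover. Every name here (`with_faults`, `call_with_retry`, `fetch_price`) and the 30% failure rate are hypothetical; real chaos experiments run against real services with far more safeguards.

```python
import random


class InjectedFault(Exception):
    """Raised by the wrapper to simulate a dependency failure."""


def with_faults(func, failure_rate: float, rng: random.Random):
    """Wrap `func` so that a fraction of calls fail before reaching it."""
    def wrapper(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFault("simulated failure")
        return func(*args, **kwargs)
    return wrapper


def call_with_retry(func, attempts: int = 3):
    """Call `func`, retrying on injected faults up to `attempts` times."""
    for attempt in range(1, attempts + 1):
        try:
            return func()
        except InjectedFault:
            if attempt == attempts:
                raise  # out of retries: surface the failure


def fetch_price():
    return 42  # stand-in for a real downstream call


rng = random.Random(7)  # seeded so the experiment is repeatable
flaky = with_faults(fetch_price, failure_rate=0.3, rng=rng)

failures = 0
for _ in range(1000):
    try:
        call_with_retry(flaky)
    except InjectedFault:
        failures += 1
print(f"requests that failed despite retries: {failures} / 1000")
```

Running an experiment like this before production turns "we assume retries are enough" into a number that can be checked and tracked over time.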

Dependency Risks Often Go Undetected

Today’s applications depend heavily on external services: payment gateways, APIs, cloud platforms. While this integration enables scalability, it also introduces risk.

The problem is that this dependency is often overlooked.

Key challenges:

- Limited visibility into third-party operations

- No control over external failures

- Delayed detection of upstream problems

If a dependency fails, it affects your system immediately. But because the problem originates somewhere else, it is difficult to identify and resolve quickly.
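
One widely used pattern for limiting the damage from a failing upstream is a circuit breaker: after several consecutive failures, calls to the dependency are skipped for a cooling-off period instead of piling up. The article does not prescribe this pattern; the sketch below is a simplified illustration with hypothetical names and values, and the clock is passed in explicitly to keep the example deterministic.

```python
class CircuitBreaker:
    """Minimal circuit breaker: opens after repeated failures, then
    lets a trial call through once `reset_after_s` has elapsed."""

    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0):
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self.failures = 0
        self.opened_at = None  # time the breaker opened, or None if closed

    def allow(self, now: float) -> bool:
        """Return True if a call to the dependency may be attempted."""
        if self.opened_at is None:
            return True
        return now - self.opened_at >= self.reset_after_s

    def record(self, success: bool, now: float) -> None:
        """Record the outcome of a call made at time `now`."""
        if success:
            self.failures = 0
            self.opened_at = None
            return
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = now


breaker = CircuitBreaker(max_failures=3, reset_after_s=30.0)
for t in (0.0, 1.0, 2.0):  # three consecutive upstream failures
    breaker.record(success=False, now=t)

print(breaker.allow(now=5.0))   # False: breaker is open
print(breaker.allow(now=40.0))  # True: cooling-off period has passed
```

The moment the breaker opens is also a useful signal in its own right: it marks an upstream problem explicitly instead of leaving it buried in scattered timeouts.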

Fragile Incident Response Frameworks

Even when problems are detected early, the response can fall short.

In high-pressure situations, uncertainty becomes a major obstacle. Teams may not have clearly defined roles, escalation paths, or recovery strategies.

This causes:

- Confusion at critical times

- Delayed decision-making

- Longer downtime

Incident response isn’t just about tools—it’s about preparation. Without regular practice and clear goals, even talented teams struggle under pressure.

Over-Reliance on Automation

Automation has transformed IT operations, but it is not a safety net for everything.

Automated scaling, failover mechanisms, and self-healing systems reduce manual effort, but they can also hide underlying problems.

When automation fails:

- Problems escalate faster than expected

- The causes are difficult to identify

- Systems behave in unpredictable ways

In some cases, automation can even make things worse by reacting inappropriately to unusual conditions.

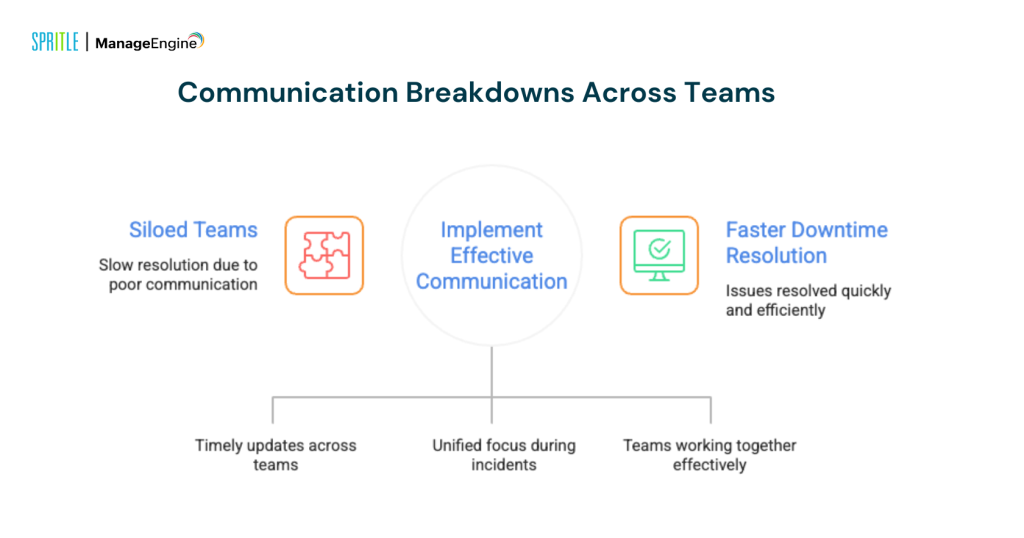

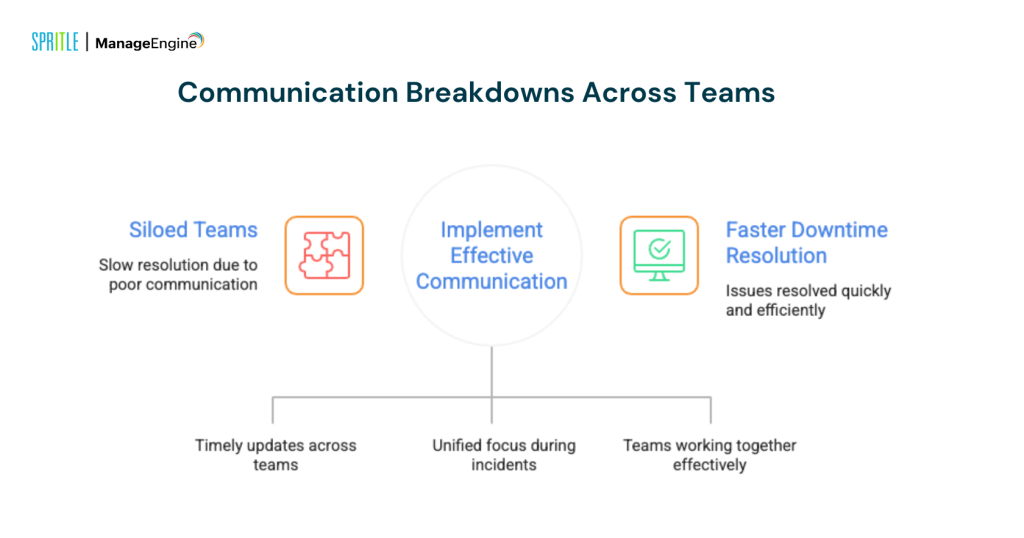

Communication Gaps Across Teams

Downtime is rarely confined to one team. It usually involves development, operations, infrastructure, and support.

If communication isn’t seamless, resolution slows down.

Common communication challenges:

- Siloed teams work in isolation

- Delayed information sharing

- Misaligned priorities during incidents

Effective communication is as important as technical expertise. Without it, even small issues can turn into major outages.

Postmortems Without Action

After an outage, a review usually follows. Root causes are identified, and lessons are documented.

But documents alone do not prevent future failures.

The real problem lies here:

- Action items are not followed through

- Process improvements are delayed

- The same risks remain in the system

As a result, the same outage occurs again—unexpected, but avoidable.

What Needs to Change?

Preventing surprise downtime isn’t about adding more tools; it’s about changing the way systems are managed.

High impact improvements include:

- Moving from reactive alerts to predictive intelligence

- Integrating monitoring for a complete system view

- Continuously testing for failure conditions

- Actively monitoring external dependencies

- Strengthening incident response with regular practice

- Turning postmortem insights into real action

One Final Thought

Downtime is rarely a sudden event. It’s a slow accumulation of unnoticed signals, disconnected systems, and ignored risks.

What feels like a surprise is often the result of missed connections.

IT teams that go beyond surface-level monitoring and focus on a deeper understanding of their systems will stay ahead. Because in reality, the goal isn’t just to react during an outage; it’s to see it coming before it happens.